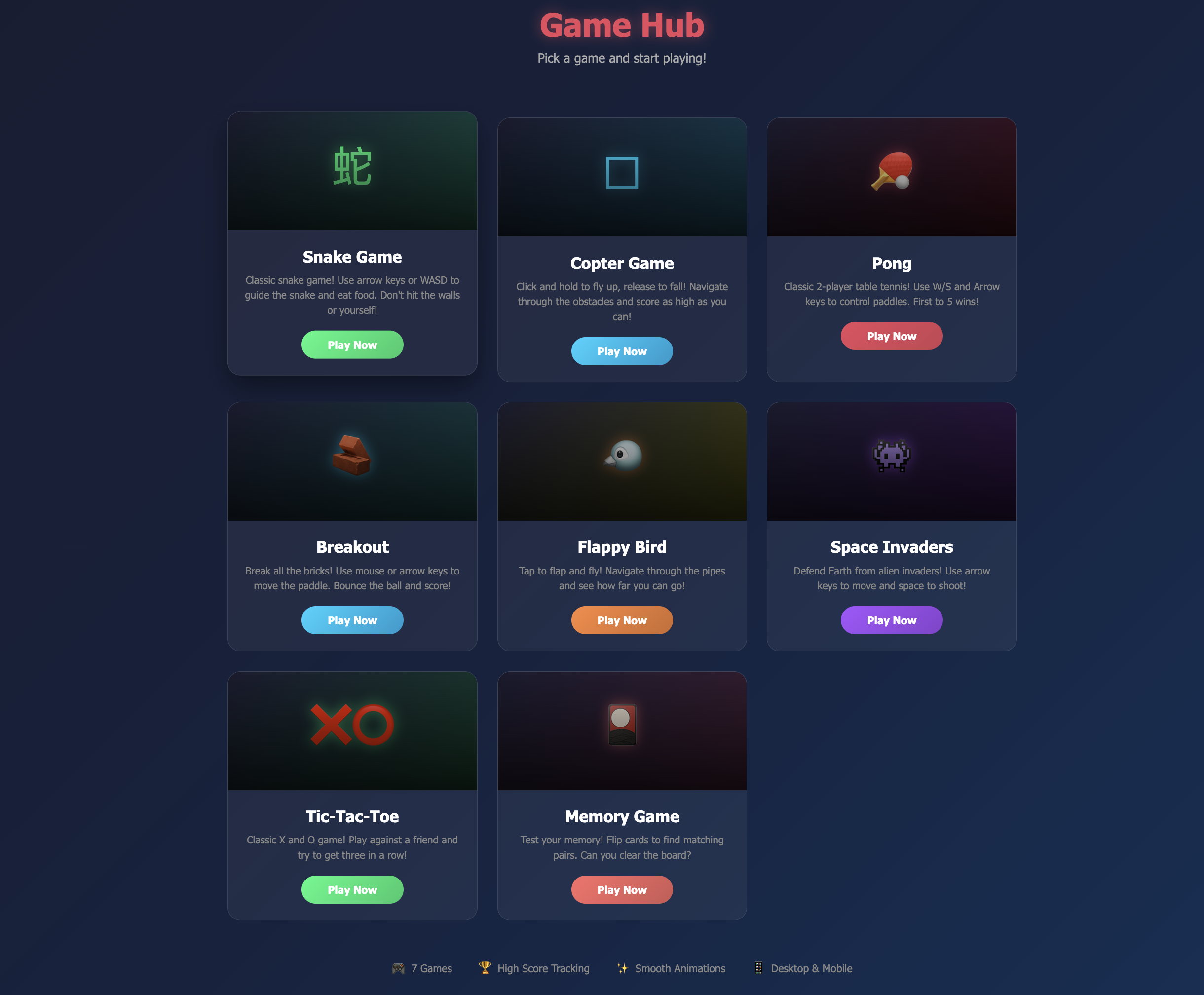

GameHub is a collection of 10 classic browser games (Snake, Pong, Tic Tac Toe, Memory, Breakout, Flappy, 2048, Sudoku, Copter, and Space Invaders) built with AI pair programming assistance from Claude Code and Qwen. This project explores how AI can accelerate game development while maintaining code quality and learning value.

The Goal

I wanted to create a portfolio piece that demonstrates:

- Classic game development fundamentals

- AI pair programming capabilities

- Clean, maintainable vanilla JavaScript code

- Fun, interactive projects that showcase technical skills

AI Tools Used

- Claude Code: Primary game logic, collision detection, game loop architecture

- Qwen: Canvas rendering optimization, physics calculations, UI responsiveness

How AI Helped

Code Generation

Claude helped me create a clean, reusable game loop pattern:

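    class Game {
      constructor(canvas, ctx) {
        this.canvas = canvas;
        this.ctx = ctx;
        this.running = false;
        this.lastTime = 0;
      }

      start() {
        this.running = true;
        this.lastTime = performance.now();
        // Let the browser supply the first timestamp. Calling loop() directly
        // would pass 0 and produce a large negative deltaTime on the first frame.
        requestAnimationFrame((t) => this.loop(t));
      }

      loop(timestamp) {
        if (!this.running) return;

        // Seconds elapsed since the previous frame
        const deltaTime = (timestamp - this.lastTime) / 1000;
        this.lastTime = timestamp;

        this.update(deltaTime); // advance game state (implemented per game)
        this.draw();            // render the current state (implemented per game)

        requestAnimationFrame((t) => this.loop(t));
      }
    }

Collision Detection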

AI generated an axis-aligned bounding box (AABB) collision check that I used for multiple game types:

    // AABB (axis-aligned bounding box) collision detection.
    // Works for any pair of unrotated rectangles with x, y, width, and height.
    function checkCollision(rect1, rect2) {
      return (
        rect1.x < rect2.x + rect2.width &&
        rect1.x + rect1.width > rect2.x &&
        rect1.y < rect2.y + rect2.height &&
        rect1.y + rect1.height > rect2.y
      );
    }

AI Opponent for Pong

Claude helped implement a ball-tracking AI opponent with adjustable difficulty:

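    class PongAI {
      constructor(difficulty = 'medium') {
        this.difficulty = difficulty;
        this.speed = this.getSpeed();
      }

      // Paddle movement per call; unknown difficulty names fall back to medium
      getSpeed() {
        const speeds = {
          easy: 0.05,
          medium: 0.1,
          hard: 0.15
        };
        return speeds[this.difficulty] || 0.1;
      }

      // Returns the paddle's next y position, chasing the ball's y coordinate
      move(ballY, playerY, paddleHeight) {
        const center = playerY + paddleHeight / 2;

        // Dead zone of 10 units keeps the paddle from jittering around the ball
        if (Math.abs(ballY - center) > 10) {
          return ballY > center
            ? playerY + this.speed
            : playerY - this.speed;
        }
        return playerY;
      }
    }

The Process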

Prompting Strategy

What worked well:

- Start with high-level architecture, then iterate on details

- Ask AI to explain concepts before generating code

- Request multiple implementations to compare approaches

- Use AI as a code reviewer, not just a generator

Human Decisions

AI couldn't decide:

- Game balance (difficulty curves, scoring)

- Visual design choices (colors, styling)

- Which features to prioritize

- When to stop iterating and ship

Challenges

What AI Couldn't Help With

- Visual design: I had to create all game sprites and styling

- Game feel: Tuning the "juice" (screen shake, particles) required human intuition

- Accessibility: Keyboard navigation improvements needed manual testing
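
To make "tuning the juice" concrete, here is a minimal screen-shake sketch. It is not GameHub's actual code: the `ScreenShake` class, its `magnitude` and `duration` parameters, and the linear decay are all assumptions chosen for illustration. The two numbers in the constructor are exactly the kind of values that had to be adjusted by feel.

```javascript
// Hypothetical helper: none of these names come from the GameHub source.
class ScreenShake {
  constructor({ magnitude = 8, duration = 0.3, random = Math.random } = {}) {
    this.magnitude = magnitude; // peak offset in pixels (tuned by feel)
    this.duration = duration;   // seconds until the shake fades out
    this.random = random;       // injectable so the behavior can be tested
    this.remaining = 0;         // seconds of shake left
  }

  // Call when something impactful happens (a hit, an explosion, ...)
  trigger() {
    this.remaining = this.duration;
  }

  // Advance the timer and return the camera offset for this frame.
  update(deltaTime) {
    if (this.remaining <= 0) return { x: 0, y: 0 };
    this.remaining = Math.max(0, this.remaining - deltaTime);
    // Linear decay: strength falls from magnitude to 0 over the duration
    const strength = this.magnitude * (this.remaining / this.duration);
    return {
      x: (this.random() * 2 - 1) * strength,
      y: (this.random() * 2 - 1) * strength,
    };
  }
}
```

In a draw step, the returned offset could be applied with `ctx.save()`, `ctx.translate(offset.x, offset.y)`, the normal rendering, and then `ctx.restore()`, so the shake never leaks into game state.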

Results

Quantitative

- Development time saved: ~40% compared to traditional development

- Number of iterations: 15-20 per game on average

- AI-generated code: ~60% of total codebase

Qualitative

- Clean, well-structured code with AI guidance

- Learned new patterns for game development

- Discovered AI's strengths (boilerplate, algorithms) and weaknesses (design, UX)

Key Learnings

- AI excels at algorithms - Minimax, collision detection, pathfinding

- Humans are needed for design - Visuals, feel, user experience

- Iterative prompting works best - Start broad, refine details

- AI as pair programmer, not replacement - Review and understand all code

- Documentation matters - AI helps write docs, but you need to know what to ask for
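
As an illustration of the first learning, here is a minimal minimax sketch for Tic Tac Toe. This write-up does not show where minimax was used, so treating it as the Tic Tac Toe opponent is an assumption, and the board representation (an array of 9 cells holding `'X'`, `'O'`, or `null`) and the function names `winner` and `minimax` are hypothetical rather than taken from GameHub.

```javascript
// Hypothetical sketch: board is an array of 9 cells holding 'X', 'O', or null.
const LINES = [
  [0, 1, 2], [3, 4, 5], [6, 7, 8], // rows
  [0, 3, 6], [1, 4, 7], [2, 5, 8], // columns
  [0, 4, 8], [2, 4, 6],            // diagonals
];

// Returns 'X' or 'O' if a line is complete, otherwise null.
function winner(board) {
  for (const [a, b, c] of LINES) {
    if (board[a] && board[a] === board[b] && board[a] === board[c]) {
      return board[a];
    }
  }
  return null;
}

// Scores are from X's point of view: +1 X wins, -1 O wins, 0 draw.
// X picks the move with the highest score, O the move with the lowest.
function minimax(board, player) {
  const won = winner(board);
  if (won) return { score: won === 'X' ? 1 : -1, move: null };
  if (board.every((cell) => cell !== null)) return { score: 0, move: null };

  let best = { score: player === 'X' ? -Infinity : Infinity, move: null };
  for (let i = 0; i < 9; i++) {
    if (board[i] !== null) continue;
    board[i] = player; // try the move
    const { score } = minimax(board, player === 'X' ? 'O' : 'X');
    board[i] = null;   // undo it
    const better = player === 'X' ? score > best.score : score < best.score;
    if (better) best = { score, move: i };
  }
  return best;
}
```

Calling `minimax(board, 'X')` returns the best score X can force and the index of a move that achieves it. This version explores the whole game tree, which is fine for a 3x3 board, and it does not prefer faster wins over slower ones; both are choices a human would still need to review.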